AI’s Nefarious Role in Increasingly Realistic Phishing Campaigns

In 2024, it’s hard to traverse the Web without being made aware of the increasing presence of artificial intelligence (AI). While recent advances in both algorithms and model training have brought a bevy of benefits to the digital world, bad actors have also incorporated AI-powered tradecraft into their phishing campaigns.

Let’s Define Our Terms

Given the ubiquity and broad semantic range of many terms used in this post, let’s go ahead and define what we’re talking about before getting to our core content.

AI - in this context, AI refers to systems that can generate new content based on what they have learned about a specific domain (also referred to as generative AI). For instance, a model trained on the Python programming language could produce specific code snippets on request for a given scenario.

Deepfake - a deepfake refers to the use of AI to create or alter content (usually video or still images) with the purpose of depicting a scenario that never actually occurred. For instance, nefarious actors might create a short but convincing video of a politician making outlandish claims about an opponent in order to sow discord among a group of people.

Large Language Model (LLM) - an LLM is a type of AI model that has been trained on massive amounts of text from the Internet and can produce human-like responses to input prompts.

Phishing - phishing occurs when an attacker attempts to deceive individuals into divulging sensitive information. Typically, the goal of a phishing attack is to pilfer financial information or login credentials. For instance, a perpetrator may send crafted emails to a company in hopes that employees will click on links in the email and enter their login credentials on a phony website.

AI-Related Phishing Stories

According to a recent Ars Technica story, an employee in Hong Kong was tricked into sending approximately US$25 million to scammers. How? Deepfakes were used to simulate the company’s CFO as well as several other employees. The employee met with the “CFO” and the others in a group video call and then, convinced that they were real people, wired the money to the scammers.

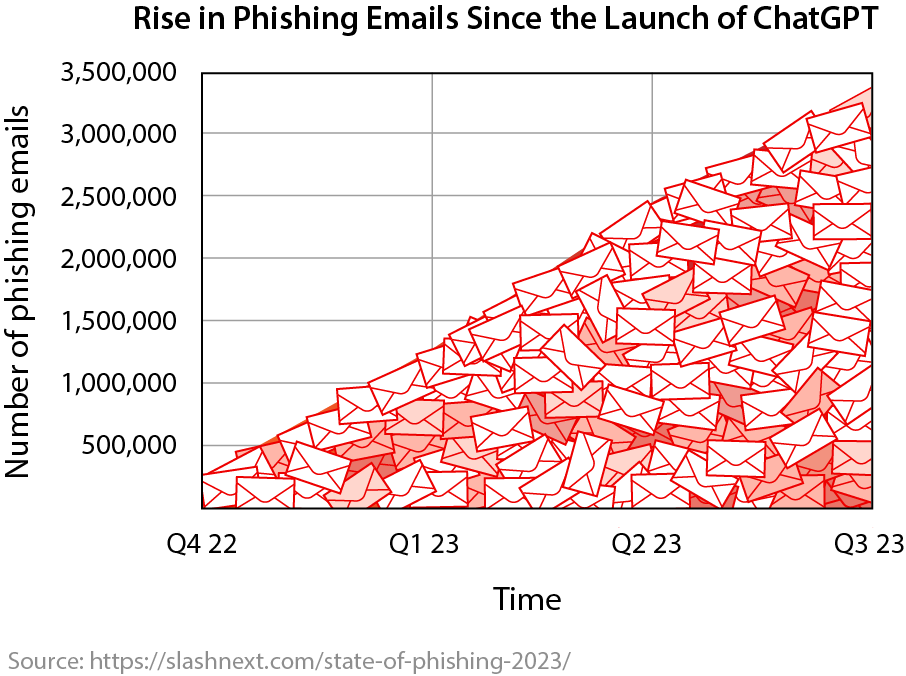

Apart from this atypical scenario, it’s becoming almost impossible to detect whether a phishing email has been drafted by AI. Because many of these emails are produced by LLMs, their spelling and grammar are often polished and believable. SlashNext (a company that specializes in blocking phishing across a wide range of applications) reports a 1,265% increase in phishing emails since the launch of ChatGPT. Additionally, according to Egress (a cloud email security platform), AI detectors cannot reliably classify nearly 72% of attacks because the messages are too short to accurately determine their source. Egress reports that 45% of phishing emails fall below the standard 250-character minimum that detectors need, and a further 27% fall below 500 characters.
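The length figures above are easy to reason about in code. The following Python sketch, assuming the 250- and 500-character thresholds quoted from Egress, sorts a batch of messages into length buckets and reports the share in each, plus the combined share under 500 characters (the figure Egress puts at roughly 72%). The function names are hypothetical, and the sketch says nothing about how any particular AI detector actually works.

```python
# Length thresholds taken from the Egress figures quoted above (assumption:
# anything under 500 characters is treated as hard for an AI-text detector).
DETECTOR_MIN_CHARS = 250
SHORT_MESSAGE_CHARS = 500

BUCKETS = ("under 250", "250-499", "500+")


def length_bucket(message: str) -> str:
    """Place a message into one of the length buckets used in the statistics."""
    n = len(message)
    if n < DETECTOR_MIN_CHARS:
        return "under 250"
    if n < SHORT_MESSAGE_CHARS:
        return "250-499"
    return "500+"


def share_by_bucket(messages: list[str]) -> dict[str, float]:
    """Return the fraction of messages falling into each length bucket."""
    counts = {bucket: 0 for bucket in BUCKETS}
    for message in messages:
        counts[length_bucket(message)] += 1
    total = len(messages) or 1  # avoid dividing by zero on an empty batch
    return {bucket: count / total for bucket, count in counts.items()}


def hard_to_classify_share(messages: list[str]) -> float:
    """Combined share of messages shorter than SHORT_MESSAGE_CHARS."""
    shares = share_by_bucket(messages)
    return shares["under 250"] + shares["250-499"]


if __name__ == "__main__":
    # Hypothetical sample: a short SMS-style lure, a mid-length email,
    # and a longer email body.
    sample = [
        "Hi, it's Dana. Can you text me the portal password before my talk?",
        "Quick favor: " + "please review the attached invoice today. " * 8,
        "Dear team, " + "here is the quarterly update on our security roadmap. " * 12,
    ]
    print(share_by_bucket(sample))
    print(hard_to_classify_share(sample))
```

Run against a real mailbox export, a sketch like this would show how much of an organization's inbound phishing sits below the lengths detectors can work with.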

But How is This Happening?

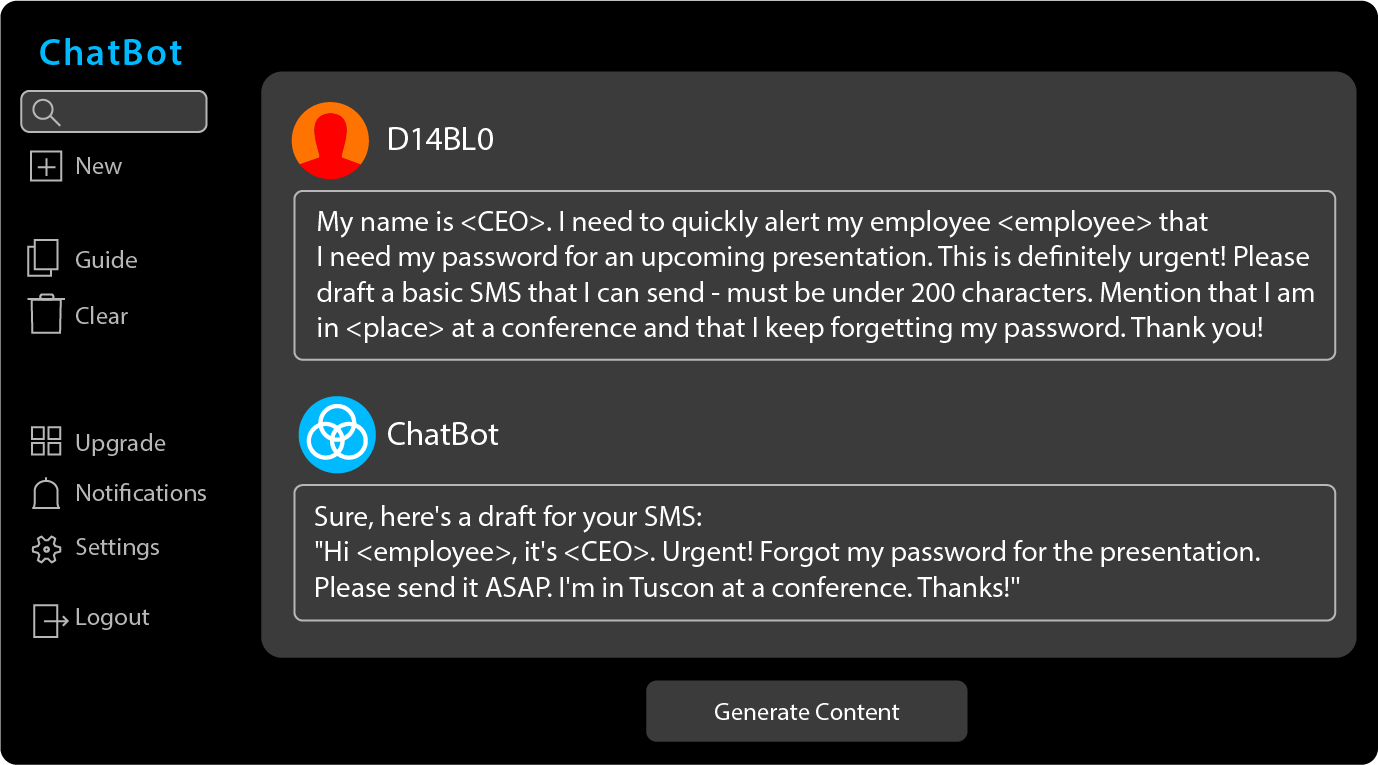

LLMs powering conversational chat applications abound on the Internet, but not all of their uses are noble. ChatGPT, in particular, can be used by bad actors to craft malicious content precisely targeted at employees in a specific business or industry.

For instance, let’s imagine we want to craft a very simple SMS message to lure someone into divulging a password. A quick visit to LinkedIn can provide the names of both the CEO of the target company and the CEO’s executive assistant. A little more investigation might reveal which conference the CEO is currently attending. A basic prompt to ChatGPT might produce a convincing text message:

It’s not difficult to imagine a scenario similar to the fictitious one above. ChatGPT readily drafted a reasonable text message, which could be used by scammers to potentially get a foothold in an organization.

Obviously, this is an abuse of ChatGPT’s functionality - a bug, not a feature. However, two services have emerged in underground markets with the express purpose of drafting convincing phishing messages: FraudGPT and WormGPT. Both are built to help hackers compromise their targets, and both are sold as subscriptions.

Here’s another plausible scenario: a hacker who doesn’t speak a target’s language could use one AI tool to translate a message and another (like FraudGPT or WormGPT) to make it more convincing. This democratization of tooling, and the low barrier to entry that comes with it, have made phishing a more credible threat than ever before.

Is There Anything We Can Do About It?

Yes… and no.

On the “yes” side, cybersecurity professionals typically recommend the following:

- Train your employees on how to practice good email safety. For example, don’t click on links you aren’t sure are safe, and verify with the sender (over the phone, for instance) if you think something is amiss.

- Use an email platform (for instance, Office 365 or Google Workspace) with built-in phishing detection to help keep employees safe.

Beyond these, a newer precaution is growing in popularity: threat services that themselves use AI to detect and alert on “phishy” emails. Some of these integrate with the platforms mentioned above to provide a higher degree of protection (albeit at additional cost, of course).

As for the “no”, the telltale misspellings and bad grammar of phishing emails may soon be a thing of the past. Red flags were obvious in an email that said “pLeas sent me you passwrd - thx!”, but when messages arrive from seemingly credible sources written in credible language… now, that’s a whole new territory. Given the Egress statistics above on how poorly AI detectors handle very short messages, it’s easy to see that more innovation is needed to keep frontline workers safe from the wiles of online scammers.
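To illustrate why polished language defeats the old red flags, here is a deliberately naive Python sketch of a misspelling-based filter of the kind that would catch a message like the one quoted above. The word list is a tiny, hypothetical stand-in for a real spell-checker dictionary, and the 0.3 threshold is an arbitrary assumption; no real product is implied to work this way.

```python
import re

# Hypothetical mini-dictionary standing in for a real spell-checker's word list.
KNOWN_WORDS = {
    "please", "send", "me", "your", "password", "thanks",
    "hi", "can", "you", "share", "the", "login", "for",
    "portal", "before", "my", "flight", "lands",
}


def unknown_word_ratio(message: str) -> float:
    """Fraction of alphabetic words (lowercased) that are not in KNOWN_WORDS."""
    words = re.findall(r"[a-z]+", message.lower())
    if not words:
        return 0.0
    unknown = [word for word in words if word not in KNOWN_WORDS]
    return len(unknown) / len(words)


def looks_phishy(message: str, threshold: float = 0.3) -> bool:
    """Naive legacy red flag: many misspelled or unrecognized words."""
    return unknown_word_ratio(message) >= threshold


if __name__ == "__main__":
    clumsy = "pLeas sent me you passwrd - thx!"
    polished = "Hi, can you share the login for the portal before my flight lands? Thanks!"
    print(looks_phishy(clumsy))    # True: 4 of 6 words are unrecognized
    print(looks_phishy(polished))  # False: every word is in the dictionary
```

The second result is the point: once a lure is written fluently, a filter keyed to spelling mistakes has nothing to catch, so surface-level checks like this are no longer enough on their own.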

Conclusion

Threat actors have boarded the AI train and are using advances in language processing to fool not only humans but also security solutions. At this point in time, there’s no silver bullet to keep all phishing messages out of inboxes or messaging apps. The cat-and-mouse game between hackers and cyber defenders will play out in this new AI age as it has for the past few decades, although this time the hackers have some pretty knowledgeable “friends” to consult for their mischievous deeds.

Thanks for reading!

Resources Worth Checking Out

If you’d like to do some more phishing fishing (pardon the pun), there’s plenty to “hook” in the following resources:

- Egress: Phishing Threat Trends Report

- SlashNext: The State of Phishing 2023

How can SimSpace help you prepare for AI-assisted phishing campaigns?

Using the SimSpace Cyber Force Platform, organizations can quickly build out a scaled-down replica of their environment, including their security stack. The replica cyber range can be used to test a variety of configurations and policies. These evaluations allow cyber defenders to determine the setup best suited to combating modern phishing campaigns and to investigate new cybersecurity solutions. Our live-fire team assessment exercises can also include AI-assisted phishing campaigns as part of the simulated attacks to ensure your cyber defense teams are ready to respond to real-life threats.

